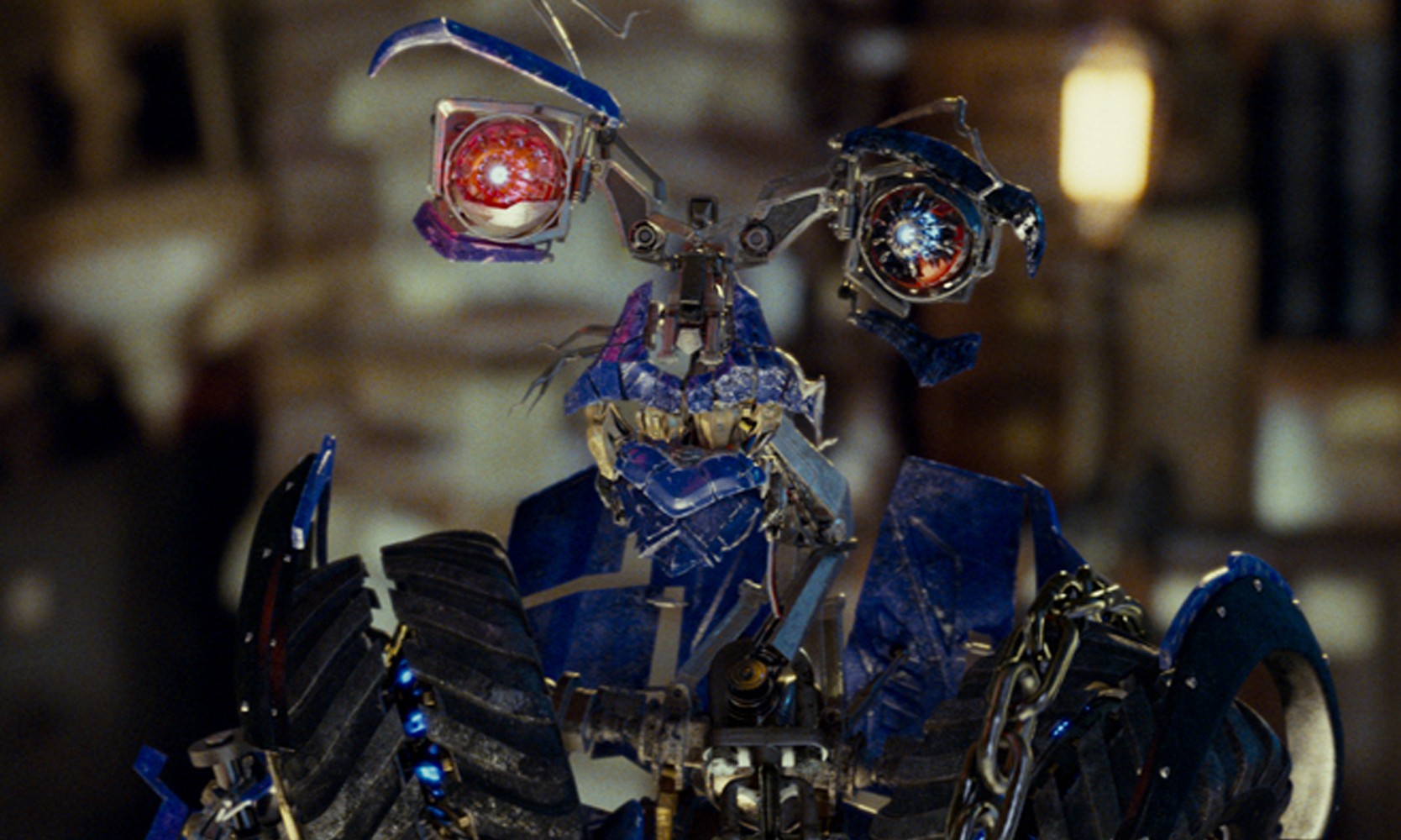

A youth chooses manhood. The week Sam Witwicky starts college, the Decepticons make trouble in Shanghai. A presidential envoy believes it’s because the Autobots are around; he wants them gone. He’s wrong: the Decepticons need access to Sam’s mind to see some glyphs imprinted there that will lead them to a fragile object that, when inserted in an alien machine hidden in Egypt for centuries, will give them the power to blow out the sun. Sam, his girlfriend Mikaela Banes, and Sam’s parents are in danger. Optimus Prime and Bumblebee are Sam’s principal protectors.

Full Name: Transformers 2: Revenge of the Fallen

Cast: Shia LaBeouf, Megan Fox, Josh Duhamel, Tyrese Gibson

The game is a battleground where players incarnate as Transformers, thrust into a captivating.

Like a high-octane fuel pumping through the veins of a Camaro that can’t wait to metamorphose into a robotic titan, the action game Transformers: Revenge of the Fallen beckons players to dive headfirst into its cosmic fray.

Abstract: Mobile vision transformers (MobileViT) can achieve state-of-the-art performance across several mobile vision tasks, including classification and detection. Though these models have fewer parameters, they have high latency as compared to convolutional neural network-based models. The main efficiency bottleneck in MobileViT is the multi-headed self-attention (MHA) in transformers, which requires $O(k^2)$ time complexity with respect to the number of tokens (or patches) $k$. Moreover, MHA requires costly operations (e.g., batch-wise matrix multiplication) for computing self-attention, impacting latency on resource-constrained devices. This paper introduces a separable self-attention method with linear complexity, i.e. $O(k)$. A simple yet effective characteristic of the proposed method is that it uses element-wise operations for computing self-attention, making it a good choice for resource-constrained devices. The improved model, MobileViTv2, is state-of-the-art on several mobile vision tasks, including ImageNet object classification and MS-COCO object detection. With about three million parameters, MobileViTv2 achieves a top-1 accuracy of 75.6% on the ImageNet dataset, outperforming MobileViT by about 1% while running $3.2\times$ faster on a mobile device.
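
The separable self-attention idea mentioned in the abstract can be illustrated with a small, dependency-free sketch. This is not the authors' code: it follows one common reading of the method (a softmax over per-token scalar scores, a single context vector, and element-wise gating of the values), and the names `separable_self_attention`, `w_i`, `w_k`, `w_v`, and `w_o` are assumptions made for this example. The point it tries to show is that no $k \times k$ attention matrix is ever formed, so the cost grows linearly with the number of tokens $k$.

```python
import math


def matmul(a, b):
    """Multiply an (n x m) matrix by an (m x p) matrix, both given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def softmax(values):
    """Numerically stable softmax over a flat list of floats."""
    peak = max(values)
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def separable_self_attention(x, w_i, w_k, w_v, w_o):
    """Sketch of separable self-attention (all weight names are hypothetical).

    x   : k tokens, each a list of d floats
    w_i : d floats, projects each token to one scalar score
    w_k, w_v, w_o : d x d matrices for keys, values, and the output projection
    """
    d = len(w_i)
    # One scalar per token, normalised over the k tokens: O(k) work.
    scores = softmax([sum(t * w for t, w in zip(token, w_i)) for token in x])
    # Weighted sum of the key projections gives a single d-dimensional context vector.
    keys = matmul(x, w_k)
    context = [sum(s * key[j] for s, key in zip(scores, keys)) for j in range(d)]
    # ReLU on the value projections, then element-wise gating by the context vector.
    values = [[max(0.0, v) for v in row] for row in matmul(x, w_v)]
    gated = [[v * c for v, c in zip(row, context)] for row in values]
    return matmul(gated, w_o)


if __name__ == "__main__":
    identity = [[1.0, 0.0], [0.0, 1.0]]
    tokens = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
    for row in separable_self_attention(tokens, [0.0, 0.0], identity, identity, identity):
        print(row)
```

Every step above touches each token a constant number of times for a fixed feature size, which is where the linear scaling comes from; standard multi-headed self-attention would instead compare every token with every other token.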
